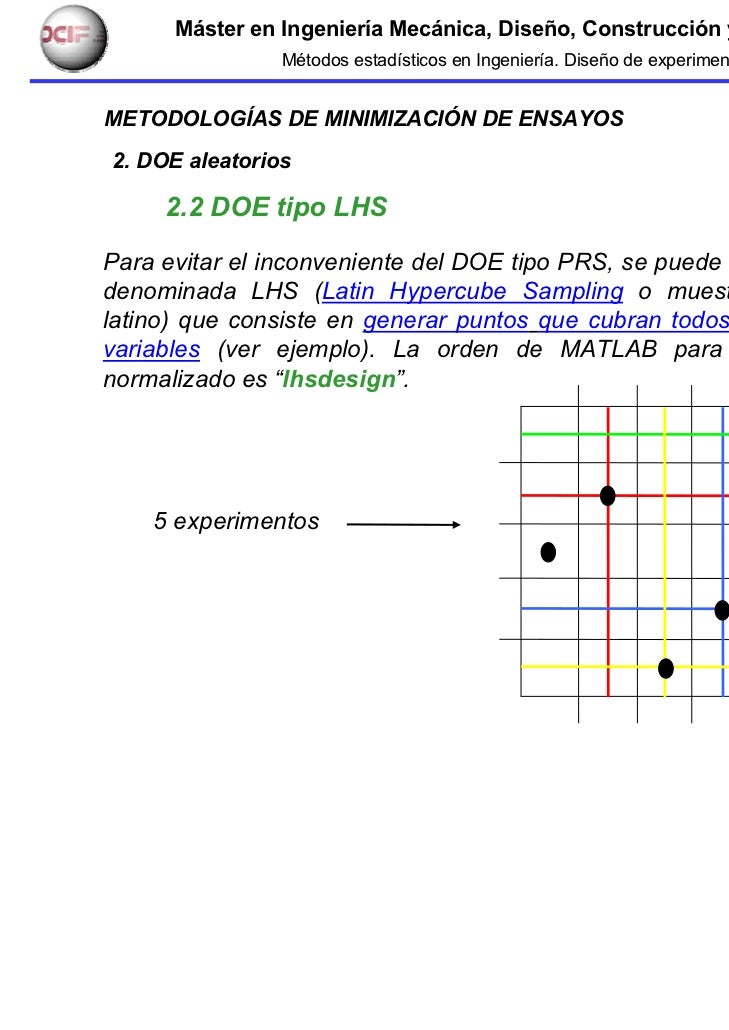

In my previous post, I showed the difference between the uniform pseudo-random and the quasi-random number generators in the hyper-parameter optimization of machine learning.

Latin Hypercube Sampling (LHS) is another interesting way to generate near-random sequences with a very simple idea. Let’s assume that we’d like to perform LHS for 10 data points in the 1-dimension data space. We first partition the whole data space into 10 equal intervals and then randomly select a data point from each interval. For the N-dimension LHS with N > 1, we just need to independently repeat the 1-dimension LHS N times and then randomly combine these sequences into a list of N-tuples.

LHS is similar to the Uniform Random in the sense that a uniform random number is drawn within each equally spaced interval. On the other hand, LHS covers the data space more evenly, in a way similar to a Quasi Random sequence such as the Sobol sequence. The comparison shows what each of the three looks like in the 2-dimension data space. In that comparison, the Sobol sequence is generated with `randtoolbox::sobol(n, dim = 2, scrambling = 3, seed = seed)`.
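The LHS steps described above can be sketched in base R. This is only a minimal illustration, not necessarily the code behind the comparison: the helper names `lhs_1d` and `lhs_nd` and the `seed` argument are hypothetical, and the data space is assumed to be the unit interval [0, 1) in each dimension.

```r
# 1-dimension LHS on [0, 1): split the range into n equal intervals
# and draw one uniform random point inside each interval.
lhs_1d <- function(n) {
  (seq_len(n) - 1 + runif(n)) / n
}

# d-dimension LHS: repeat the 1-dimension LHS independently for each
# dimension, shuffle each sequence, and bind them into n tuples (rows).
# `seed` is an assumed convenience argument for reproducibility.
lhs_nd <- function(n, d, seed = NULL) {
  if (!is.null(seed)) set.seed(seed)
  cols <- lapply(seq_len(d), function(j) {
    x <- lhs_1d(n)
    x[sample.int(n)]  # random order, so tuples are combined at random
  })
  do.call(cbind, cols)
}

# 10 points in the 2-dimension data space
pts <- lhs_nd(10, 2, seed = 1)

# Interval index (0..9) of every point, sorted per dimension; each
# dimension should contain every interval exactly once.
strata <- apply(pts, 2, function(x) sort(floor(x * 10)))
```

Shuffling each column independently is what "randomly combine" means here: every dimension still has exactly one point per interval, but which intervals end up paired in a tuple is random.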
